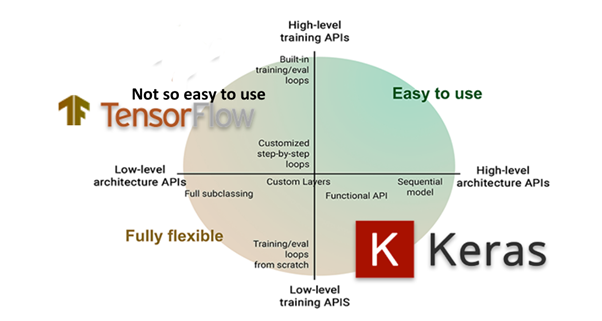

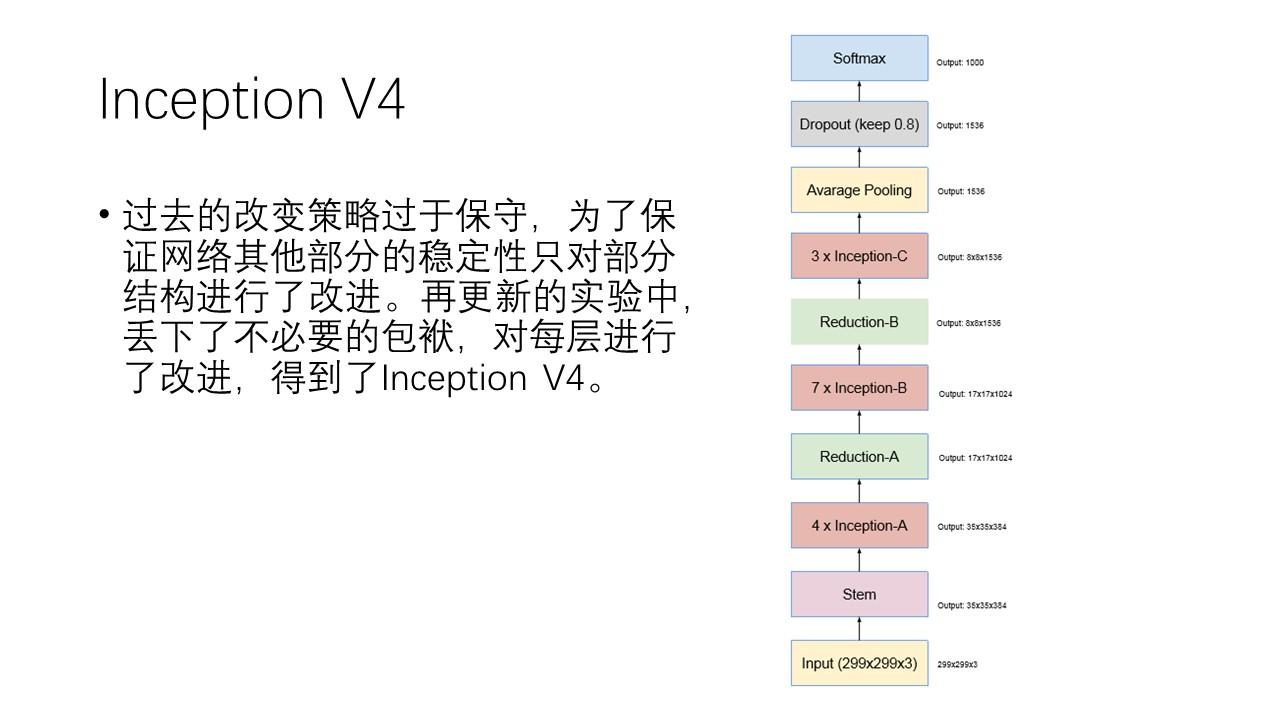

This tutorial demonstrates how to use models from TensorFlow Hub with `tf.keras`. TensorFlow Hub is a repository of pre-trained TensorFlow models.

GoogLeNet, the first version of the Inception models, was proposed for the 2014 ILSVRC (ImageNet Large Scale Visual Recognition Challenge), which it won.

The Keras Sequential model lets us develop models layer by layer. It works well for a plain stack of layers where each layer has exactly one input tensor and one output tensor. However, it does not allow us to create models with multiple inputs or outputs; the Keras Functional API covers those cases.

Sequential class: `keras_core.Sequential(layers=None, trainable=True, name=None)`. `Sequential` groups a linear stack of layers into a `Model`.
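As a minimal sketch, assuming the R `keras` package with a working TensorFlow backend, a Sequential model can be built by piping layers onto `keras_model_sequential()`, so that each layer consumes the single output of the layer before it. The input size and layer widths below are arbitrary illustration values, not taken from a real model.

```r
library(keras)

# A linear stack: one input tensor, one output tensor, layers applied in order.
# The sizes (784 input features, 64 hidden units, 10 outputs) are illustrative.
model <- keras_model_sequential(input_shape = c(784)) %>%
  layer_dense(units = 64, activation = "relu") %>%
  layer_dense(units = 10)

# Each element of model$layers is one stage of the stack
# (the implicit input layer is not listed).
length(model$layers)
```

Because every layer has exactly one input and one output, the model needs no explicit wiring between layers; that is precisely what stops working once a model needs several inputs or outputs.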

Wrapping the trained model with a `Softmax()` layer gives `probability_model`; calling `predict(test_images)` on it returns `predictions`, one row of class probabilities per test image. At this point the model has predicted the label for each image in the testing set.

A common debugging workflow is to add layers with `%>%` and then call `summary()`. When building a new Sequential architecture, it's useful to stack layers incrementally and print a model summary after each step. For instance, this lets you monitor how a stack of Conv2D and MaxPooling2D layers downsamples image feature maps.

The following code builds a Sequential model and then creates a feature extractor that returns the output of one intermediate layer:

```r
library(keras)
library(tensorflow)

initial_model <- keras_model_sequential(input_shape = c(250, 250, 3)) %>%
  layer_conv_2d(32, 5, strides = 2, activation = "relu") %>%
  layer_conv_2d(32, 3, activation = "relu", name = "my_intermediate_layer") %>%
  layer_conv_2d(32, 3, activation = "relu")

# New model: same inputs, but its output is the intermediate layer's output.
feature_extractor <- keras_model(
  inputs = initial_model$inputs,
  outputs = get_layer(initial_model, name = "my_intermediate_layer")$output
)

# Call feature extractor on test input.
x <- tf$ones(shape(1, 250, 250, 3))
features <- feature_extractor(x)
```

Transfer learning with a Sequential model

Transfer learning consists of freezing the bottom layers in a model and only training the top layers. If you aren't familiar with it, make sure to read our guide to transfer learning.

One common transfer learning blueprint involving Sequential models: let's say that you have a Sequential model, and you want to freeze all layers except the last one. In this case, you would simply iterate over `model$layers` and set `layer$trainable = FALSE` on each layer except the last one.
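A minimal sketch of that blueprint, assuming the R `keras` package with a TensorFlow backend. The three dense layers and their sizes are hypothetical stand-ins for a real pre-trained model, whose weights you would normally load before freezing.

```r
library(keras)

# Hypothetical stand-in for a pre-trained model (sizes are illustrative).
# In practice, load the pre-trained weights before freezing layers.
model <- keras_model_sequential(input_shape = c(784)) %>%
  layer_dense(units = 32, activation = "relu") %>%
  layer_dense(units = 32, activation = "relu") %>%
  layer_dense(units = 10)

# Freeze every layer except the last one.
# head(x, -1) drops the last element of the list of layers.
for (layer in head(model$layers, -1)) {
  layer$trainable <- FALSE
}

# Only the last layer's kernel and bias should remain trainable.
length(model$trainable_weights)
```

Checking `model$trainable_weights` after freezing is a quick way to confirm that only the top layer will be updated during training.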

In general, it's a recommended best practice to always specify the input shape of a Sequential model in advance if you know what it is. Models built with a predefined input shape always have weights (even before seeing any data) and always have a defined output shape.
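To illustrate the difference, here is a sketch (again assuming the R `keras` package; the 4-feature input and the layer sizes are arbitrary) comparing a Sequential model defined without an input shape to one defined with it.

```r
library(keras)

# No input shape: the layers cannot create their weights yet,
# so the model stays unbuilt until it is called on data.
model_lazy <- keras_model_sequential() %>%
  layer_dense(units = 2, activation = "relu") %>%
  layer_dense(units = 3)
model_lazy$built

# Input shape known in advance (4 features): the model is built immediately.
model_built <- keras_model_sequential(input_shape = c(4)) %>%
  layer_dense(units = 2, activation = "relu") %>%
  layer_dense(units = 3)
model_built$built

# Two dense layers, each with a kernel and a bias.
length(model_built$weights)
```

Only the second model can be summarized or inspected before training, which is what makes the incremental `summary()` debugging workflow practical.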
